AI regulation is no longer a future concern for compliance teams. It is already showing up in daily work.

Across the Regology platform, requests related to AI governance, risk, and regulatory interpretation have steadily increased. At the same time, informal adoption is outpacing the formal oversight structures meant to govern it.

The result is a familiar dynamic: AI is being used within organizations, but not always governed.

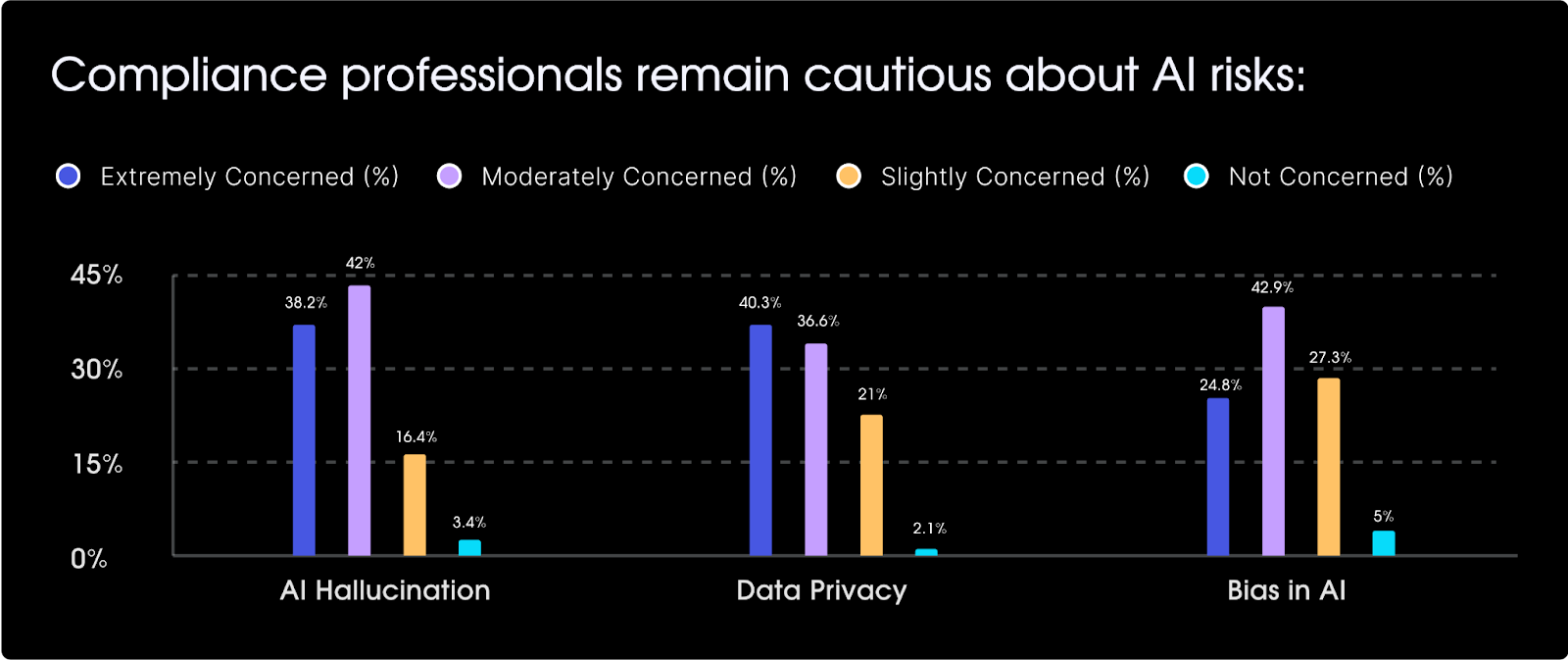

Regology’s 2026 survey data shows that nearly 39% of organizations still lack a formal AI risk review process, even as tools like ChatGPT and Copilot are being used informally across workflows. Compliance teams are acutely aware of the risks (accuracy, privacy, and confidentiality consistently rank as top concerns), but that awareness has not yet translated into structured governance.

What’s missing is clarity.

Many organizations are actively trying to understand how to govern AI use across internal teams, vendors, and external partners. There is strong internal advocacy, increasing vendor activity, and growing executive attention.

But regulatory guidance remains limited and open to interpretation. Federal agencies have issued directives, and states are beginning to act. Yet for many organizations, the path forward still feels uncertain. At the same time, there is broad agreement about where things are heading: stronger regulation is coming at both the federal and state levels.

That combination—rising adoption, incomplete guidance, and inevitable regulation—is what is driving urgency.

The instinct in moments like this is to wait for clearer rules. But in practice, that delay creates risk.

AI is already being used to manage growing volumes of regulatory change, operational complexity, and administrative workload. The organizations making the most progress are not waiting for definitive regulation. They are building governance frameworks now, grounded in existing guidance, adaptable to future change, and tied directly to how their organization operates.

One of the most consistent patterns Regology sees across organizations is a move toward principle-based governance frameworks. Rather than anchoring governance to specific tools or use cases, leading teams are defining durable principles that can be applied across evolving technologies.

For healthcare organizations, for example, these principles often align with existing regulatory expectations and organizational priorities, including:

This approach is not new. It mirrors how healthcare organizations successfully navigated earlier waves of innovation, such as mobile health applications, text-based communication, and patient portals. The technologies changed quickly, but the underlying principles remained stable and served as governance signposts.

AI should be approached in the same way: as an evolving capability that requires consistent guardrails, not one managed through disconnected, one-off rules.

Regulatory guidance already supports this direction. Recent examples such as Executive Order 14179, the 2023 NIST AI Risk Management Framework, and emerging state-level legislation such as the Texas Responsible AI Governance Act and the Colorado AI Act reinforce the importance of principled oversight tied to real-world outcomes.

The goal of iterative governance development is not to anticipate every possible use case. It is to define a framework that holds as those use cases evolve.

No AI governance framework will be complete at launch. Organizations that treat governance as a static, one-time effort will quickly fall behind. The more effective approach is to design for iteration.

This means treating governance as a series of planned, structured releases, each building on the last. Core components such as data usage policies, record retention standards, and audit protocols can be phased in over time based on priority and risk.

Just as importantly, governance must evolve in sync with infrastructure and operations. When one advances significantly faster than the others, gaps emerge: Governance that is too far ahead becomes theoretical; governance that lags becomes reactive.

Iteration also requires continuous awareness. As regulatory expectations evolve, organizations need a clear view of what is changing and how it applies to their framework. Regology supports this by tracking regulatory developments across AI-related topics and surfacing relevant updates as they emerge.

The key is not speed for its own sake. It’s alignment.

One of the most important mindset shifts is how governance is positioned internally.

If governance is framed purely as a compliance checkpoint, it will slow adoption and create friction. If it is positioned as an enabler of responsible innovation, it becomes a strategic asset.

Healthcare organizations are already seeing this dynamic play out. In areas such as health equity, asynchronous patient communication, and interoperability, progress has depended on governance frameworks that enabled (not blocked) new capabilities.

AI is no different. A well-designed governance framework allows organizations to move faster with confidence, knowing that guardrails are in place and risks are being managed.

Like any cross-functional initiative, AI governance succeeds or fails based on ownership.

Ambiguous ownership is one of the most common reasons governance efforts stall. When responsibility is unclear, decisions are delayed, frameworks remain incomplete, and adoption proceeds without consistent oversight.

Leading organizations are assigning clear executive ownership for AI governance, ensuring that it has both visibility and accountability at the highest levels. These leaders are responsible not only for defining the framework but for maintaining momentum and keeping governance aligned with regulatory developments, operational needs, and strategic priorities.

Regology supports this role by providing summarized analysis of AI-related regulatory developments, helping leaders stay focused and aligned with evolving industry expectations.

There is no single “correct” AI governance framework, but there is growing alignment around a set of foundational principles:

AI governance is not a future problem. It is a present and ongoing responsibility, one that is expanding alongside adoption, regulatory attention, and operational reliance on AI tools.

Organizations that wait for perfect clarity will find themselves reacting. Those that build principled, adaptable frameworks now will be better positioned to manage risk, capture value, and respond as regulation continues to evolve.